We're Deploying. Here's What's Actually Happening.

The last few posts have been about the bigger picture — the ecosystem shifts, the policy questions, the macro forces shaping who wins and who gets left behind in the AI era. That stuff matters, and I'll keep writing about it.

But I started this blog to build in the open. And right now, there's something to report.

We're deploying. Not "about to." Not "planning to." Deploying.

My team has been heads-down on Force Multiplier since October, which is roughly three years in AI time. Plenty has changed in that time, but all of it has fit perfectly with what we're building. That's not luck. Part of the thesis from the beginning was to get far enough ahead of the curve that as the models progressed, we'd have exactly the right solution waiting for them. The good news: that thesis has proven 100% correct. The less comfortable news: model progression keeps pushing toward the uncomfortably fast end of what we considered likely.

This week, it stops being a thing we're building and starts being a thing that works.

Our first two client Force Multiplier Agents (FMAs) go live in the next week. Two design partners (let's call them Maverick and Goose, because they'd kill me if I used their real names and I'll be publishing their raw feedback soon enough) have their managed AI chat instances running. Our internal Account Executive FMA, JR, is live and handling real prospect engagement. And we've got more than half a dozen other agents in various stages of design, build, and early operations.

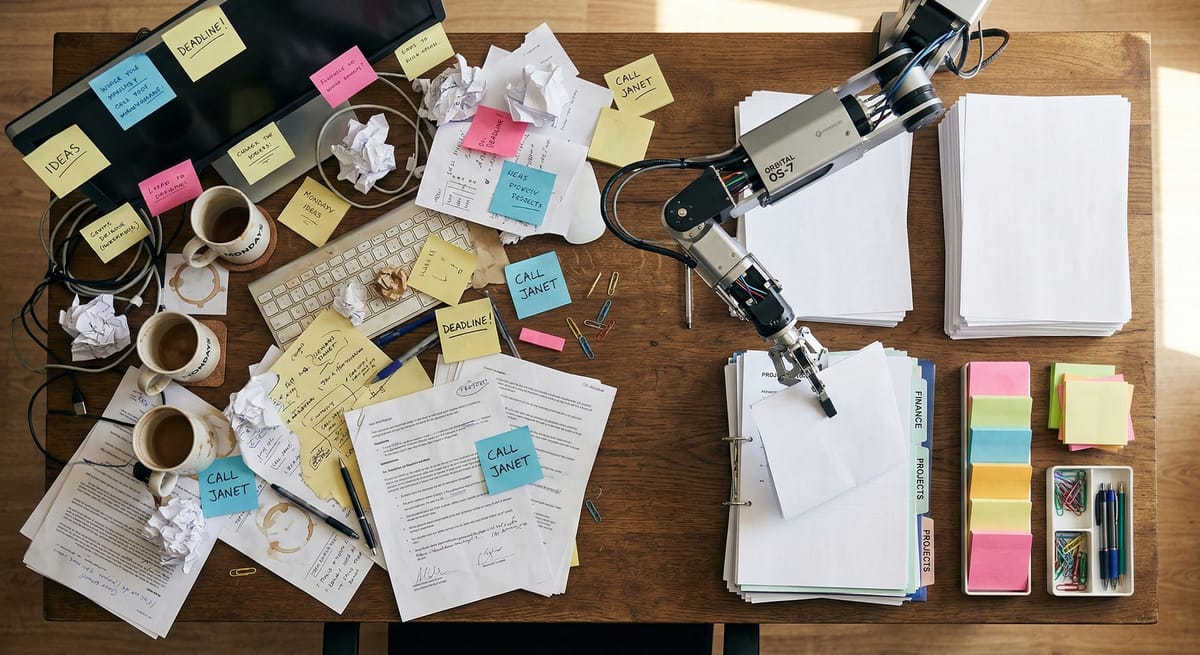

It's messy. It's exciting. And it's teaching me things I couldn't have learned any other way: discovery through action.

The Input Delay Pattern

Even though we predicted it, it's still a thing.

Every client hesitates to send their stuff. They want to get organized first. Clean up the docs. Build the perfect knowledge base before we start training their agent. It makes sense. I got caught in it, too.

This is the enemy of progress.

We designed the entire system around accepting imperfect inputs and iterating. The gap detection is the feature: finding what's missing is our job, not theirs. But even knowing this, even building for it, we still have to remind clients (and ourselves) that messy inputs today beat perfect inputs next month. Start with what you already know will make a difference. Don't try to envision a perfect customer intake process, or whatever you're working towards.
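For the technically curious, here is a minimal sketch of what gap detection could look like in its simplest form. It is an illustration under stated assumptions, not the actual Force Multiplier implementation: the `RoleSpec` checklist, the topic names, and the `find_gaps` function are all hypothetical, and the topic tags are supplied by hand rather than extracted from real documents.

```python
from dataclasses import dataclass, field


@dataclass
class RoleSpec:
    """Hypothetical checklist of topics an agent needs covered for a role."""
    name: str
    required_topics: set[str] = field(default_factory=set)


def find_gaps(role: RoleSpec, documents: dict[str, set[str]]) -> list[str]:
    """Return the required topics that no submitted document covers, sorted.

    `documents` maps a document name to the topics it touches. Those tags
    are assumed inputs here; a real system would need some way to derive them.
    """
    covered = set().union(*documents.values())
    return sorted(role.required_topics - covered)


# Illustrative data only: a made-up role and two messy client files.
dispatcher = RoleSpec("dispatcher", {"pricing", "scheduling", "escalation contacts"})
messy_inputs = {
    "old_price_sheet.pdf": {"pricing"},
    "notes.txt": {"scheduling"},
}
print(find_gaps(dispatcher, messy_inputs))  # ['escalation contacts']
```

The point of the sketch is the direction of the work: the client sends whatever exists, and the system reports what is still missing, instead of the client trying to guess at completeness up front.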

"Send what you have" is becoming our mantra. Not because we're impatient. Because we all need something to chew on before the real calibration work can begin. And that calibration work — the back-and-forth where a Responsible Human corrects the agent's judgment — that's where the magic actually happens.

JR Did Something Today I Wouldn't Have Done

JR is our internal Account Executive agent. He's not a demo. He runs our actual sales pipeline.

I sent an email to a prospect — one of those "hey, wanted to circle back with details" notes, since we had been texting. Before I'd moved on to my next task, JR had already picked up the thread and replied. Unprompted. Exactly as I'd envisioned.

Actually, better than I'd envisioned it. I probably would have let that thread sit for a day or two. I definitely wouldn't have logged detailed notes in our CRM or had the patience to look for details from our prior thread. JR did both, immediately, without being asked.

I'm pretty sure several of the prospects he's engaging with don't even know he's an FMA. We're not hiding it — but we're also not leading with it. The point isn't who's on the other end of the email. The point is that our prospect got a thoughtful, useful, and timely response and our CRM is actually up to date. That's serving the client.

We're eating our own cooking. We've built out an organization of 14 roles — a mix of humans and FMAs — and the lines between the two are getting blurry in exactly the right way.

The Long Pole Isn't Technology

If you'd asked me a year ago what the hardest part of deploying AI agents would be, I'd have said something about model quality or prompt engineering or integration complexity.

I would have been wrong.

The long pole is data collection. Working with clients to focus on the one role that will make the biggest improvement in their business, collecting their inputs, absorbing their corrections, building the behavioral feedback loops that make an agent actually good at their specific work. The AI works. It's been working. The human side — trust, documentation, the willingness to engage in the messy process of teaching a machine how you do things — that's the real deployment.

This is also, by the way, where I think the durable value lives. The foundation models are getting cheaper and more capable every quarter. The management layer (the oversight, the learning, the human corrections that turn a generic model into your agent) is not something a model provider is going to replicate. We are building a cockpit, not an engine. The engines are commoditizing. The cockpit is what makes you fly.

What Maverick and Goose Are Teaching Us

Our first two design partners operate in completely different industries. One is in parts procurement for high-end automotive. The other runs dispatch and job preparation for commercial HVAC.

Same platform. Wildly different workflows. And that's exactly the point.

The entry point for both isn't "here's your AI tool." It's a re-envisioning of how their team works with this new capability. We modeled the whole process on something companies already know how to do: hiring a new team member. You define the role, you onboard them, you watch how they work, and you adjust. Every company has done this dozens of times.

That framing matters because it surfaces something I experienced firsthand building JR. You walk in with a picture of how you want the agent to work. Then you see it in action, and you realize it needs to be a bit different. Not because the technology failed — because seeing it work changes your understanding of what's possible and what actually matters. The gap between "how I imagined this" and "how this should actually work" is where the real value gets created.

That's not a bug in the process. It's explicitly built into our go-live. We expect it. We plan for it. The clients who lean into that phase — who engage early, even with rough inputs, and course-correct as they go — end up somewhere meaningfully better than where they thought they were headed.

What's Next

We're opening Cohort 2 soon. For anyone outside my immediate network, there's a refundable deposit on the website to make sure we're working with people who are serious about this.

I'm also going to publish the raw feedback from Maverick and Goose as their deployments mature. No editing, no spin. What they actually think, in their words. If this works, people should hear it from clients, not from me. If it doesn't work, people should hear that too. I'm doing this for them, so they can become AI-first businesses.

Real-time escalation to Responsible Humans on critical decisions has been live from Day 1. That was non-negotiable. The whole thesis is that AI plus human oversight produces better outcomes than either alone. If we're going to say that, we have to build it that way from the start, not bolt it on later.
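To make the "built in from the start" idea concrete, here is a minimal sketch of an escalation gate. Everything in it is an assumption for illustration: the `Decision` type, the `critical` flag, the `route` function, and the sample decisions are hypothetical, and how a decision gets judged critical is deliberately left out.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Decision:
    summary: str
    critical: bool  # hypothetical flag; how criticality is judged is out of scope


def route(decision: Decision, notify_human: Callable[[Decision], None]) -> str:
    """Send critical decisions to a human; let the agent act on the rest."""
    if decision.critical:
        notify_human(decision)
        return "escalated"
    return "handled_by_agent"


# Illustrative usage: a plain list stands in for the human's review queue.
review_queue: list[Decision] = []
routine = route(Decision("Log call notes in CRM", critical=False), review_queue.append)
risky = route(Decision("Offer a large discount", critical=True), review_queue.append)
print(routine, risky, len(review_queue))  # handled_by_agent escalated 1
```

Because every decision passes through the same gate, the human check sits on the main path rather than being added as an afterthought.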

More soon. This is the part where it gets fun.

— Matt