The Market Is Real. Here's Why They Won't Win It.

Part 1: The Management Layer

I believe AI employees are coming to every serious business within the next two years. Not chatbots. Not copilots. AI that does real work — collections, sourcing, customer communications, documentation — with a human directing the operation and a management layer that keeps it honest.

I believe the companies that weld their operations to a single AI model provider — their architecture, their tools, their engineers, their roadmap — will wake up in three years locked into a dependency they can't exit. Just like Google Ads. Just like Salesforce. Just like AWS. Except faster and deeper, because AI agents don't sit in a dashboard — they embed into the operational core of your business. And every correction your team makes, every judgment call they contribute, every piece of institutional knowledge they teach the system, gets captured inside that provider's ecosystem.

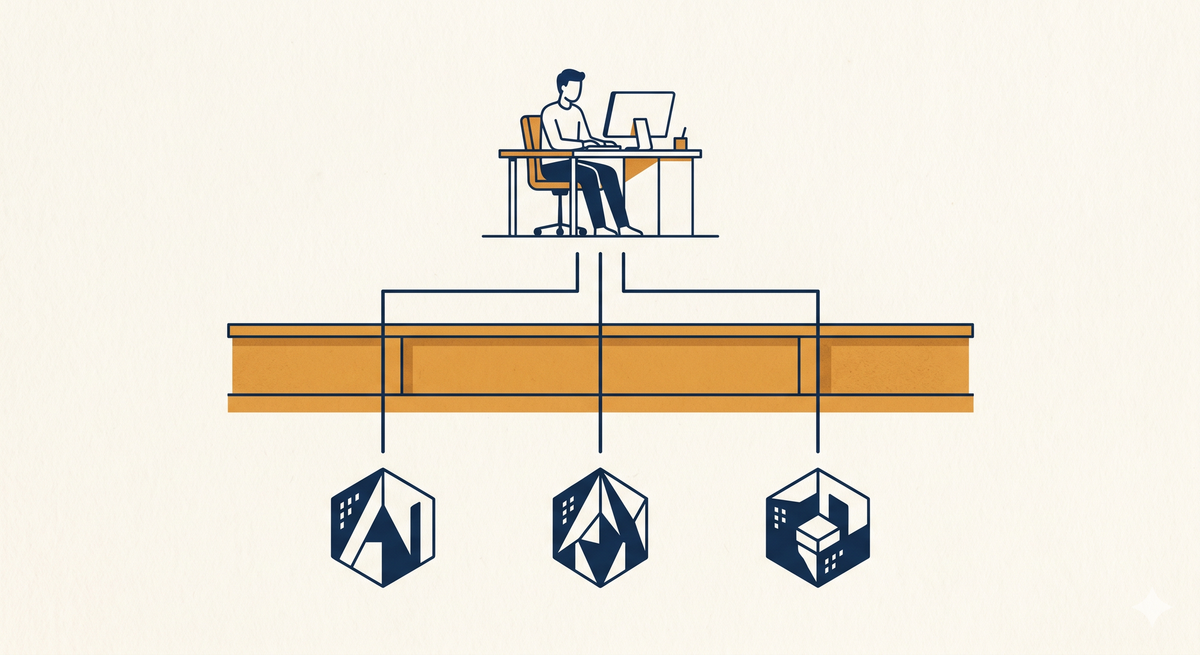

I believe the right architecture keeps humans in the loop, lets you swap models when the landscape shifts, and ensures that the intelligence your business creates works exclusively for you — not for the platform provider, not for their investors, and not for their next product launch.

And I believe this because I've been building it for six months, in public, on this blog, with real clients in production — and it's working.

On May 4th, Anthropic and OpenAI confirmed I'm right about the market. Now let me tell you why they're wrong about the approach.

Anthropic and Blackstone announced a $1.5 billion joint venture to embed Claude engineers inside mid-market companies. Hours earlier, OpenAI finalized "The Deployment Company" — a $10 billion vehicle backed by TPG and eighteen other investors, with a guaranteed annual return floor of 17.5%.

Combined: $11.5 billion aimed at putting AI employees inside your business.

Anthropic's CFO said it plainly: "Enterprise demand for Claude is significantly outpacing any single delivery model." The company that built the model is telling you the model alone is not enough. Anthropic needed Blackstone's portfolio and Goldman's distribution. OpenAI needed TPG, Bain, and Brookfield. The most valuable AI companies in history looked at the gap between what their models can do and what businesses actually need, and concluded it was an $11.5 billion problem.

They're right about the gap. They're wrong about who should fill it.

Look at who's doing the filling. OpenAI lists Bain & Company, McKinsey, and Capgemini as consulting partners. Anthropic's partner network includes Accenture, Deloitte, and PwC.

I used to be an executive at Accenture. I know exactly what these firms are good at and what they aren't. They are excellent at organizational restructuring (telling executives which headcount to cut and how to communicate it) and OK-ish at technology. They are not good at understanding the operational reality of a 75-person commercial HVAC company, or a regional logistics firm, or a specialty manufacturer. They don't know which collections person has fifteen years of institutional knowledge in her head. They don't know about the shared computer with the bookmarks. They have no muscle memory for valuing the human expertise that makes AI deployment actually work, because their business model has always been about cutting cost through efficiency: standardizing, documenting, and analyzing. It has never been about amplifying the people who hold the knowledge.

The single most important thing I've learned deploying AI employees into real businesses is that the bottleneck is never the AI. It's the discovery process — the deep, patient work of understanding what your best people actually do and why, so the AI can be designed to work with their judgment, not despite it.

Now picture McKinsey doing that work. Picture them sitting with your best employee for three days, learning the subtleties of how she handles an escalation with a difficult contractor, humbling themselves to the reality of how businesses actually generate value. Then imagine the consultant assuring her that her role is secure, while a colleague from the same firm has just finished cutting the recruiting department's headcount in half.

The consulting model can't do this work because the employee knows what the consultant is there for.

Both JVs follow the same structural playbook. The model provider's engineers and the consulting firm's change managers come into your business. They deploy the model provider's technology into your core processes. The institutional knowledge your best people have spent years developing gets encoded into the model provider's architecture, formatted for the model provider's tools, optimized for the model provider's models.

When Anthropic's JV needs to show returns to Blackstone, whose interests does Claude serve — yours or the consortium's? When OpenAI guarantees TPG 17.5% annually for five years, where does that return come from?

It comes from you.

And there's a dimension to this that's easier to miss. When you build on a provider's platform, you inherit their roadmap. The day after announcing the Blackstone JV, Anthropic launched ten pre-built AI agents for financial services, including agents for pitchbook creation, KYC screening, valuation review, and general ledger reconciliation. FactSet's stock dropped 8% in a single session. The market drew a straight line: if Claude can do what companies like FactSet sell, those companies are at risk.

Now imagine you're a financial services firm that spent six months building your own AI-powered diligence workflow on Claude's platform. You tuned it. Your team trained it. And then your platform provider launched a competing product — using everything they learned from firms like yours, on infrastructure they control, with capabilities they can reprice at any time.

It's not just "what if prices go up." It's "what if the platform decides to do what I do, and more?"

A smart investor named Yishan posted a thesis last year that went viral: every AI application startup gets crushed by foundation model providers expanding into the application layer. The ground never stabilizes. The model providers are the only ones who survive the sea changes they themselves are driving.

He's right — with one exception. The exception is when the value isn't in the model.

The model providers just told you this themselves. If the model alone were sufficient, they wouldn't need $11.5 billion in PE capital and consulting distribution. They need it because deploying AI into a real business requires a layer they don't provide and structurally never will: the management layer. Oversight. Audit. Organizational fit. The human judgment about which tasks the AI handles, which it escalates, and who's accountable when something goes wrong.

That's what I've been building. And neither JV is designed to provide it — because providing it would conflict with what they're actually designed to do, which is distribute one provider's technology as deeply as possible into your operations.

$11.5 billion has been announced. Press releases have been issued. Consulting partnerships have been signed. But as of today, neither JV has published a case study of an AI employee running in production inside a client's business. They are describing what engagements will look like. We're running them.

Our design partner clients have been operating Force Multiplier Agents in production for over a month. One client cut their parts sourcing labor by half. Another is seeing material decreases in diagnosis time and improvements in first-visit fix rates — the kind of operational metrics that directly hit the bottom line. These aren't demo numbers. They're production numbers from real businesses with real customers on the other end.

You'll hear directly from these clients in my next post.

Right now, we're ahead on the real work, but that won't last forever. Which is why our approach matters more than the head start.

What does the alternative look like today?

Model independence. Your AI employees work with whatever model is best for each task and migrate when something better arrives. We use Claude heavily today. If something surpasses it tomorrow, our clients' operations migrate without rebuilding. That's not an aspiration — that's how the system works.

Oversight that survives. One of my early posts was about Summer Yue — Meta's head of AI safety — whose emails got deleted by an autonomous agent because she didn't have the right oversight layer. If it happens to the head of AI safety at the company that built the model, it will happen to your business. Oversight has to be architecturally external — a management layer that persists regardless of what the agent is doing. Who watches the AI when the engineers go home?

Your intelligence works exclusively for you. It doesn't train someone else's model. It doesn't get shared across a consortium's portfolio. The value your people create by teaching the system stays inside your walls. We're not claiming we've solved data sovereignty at every layer; that's a bigger architectural problem, and I'll get to it. What we've built today is a structure where your operational intelligence can't be leveraged against you. That's the floor, not the ceiling.

Now here's the part where I have to be honest, because that's the deal I made when I started this blog.

What I just described is real and operational today. It works. But it's not the whole picture.

The full vision for sovereign intelligence is bigger than a management layer. It's an architecture where the intelligence your business creates compounds for you permanently — not just protected by contract, but structured so that the value flows back to the people and businesses that generate it. Where the humans who drive AI adoption share in the economic value they create, instead of training themselves out of a job. Where your data doesn't just stay private — it becomes an asset that appreciates.

I'm not done building that. It's the reason I'm doing this at all. But I won't pretend the whole architecture is shipping today when it isn't. What's shipping today is a set of operational Force Multiplier Agents working under our management layer, and that alone is enough to answer the question these JVs just put on the table.

The question isn't whether AI employees are coming to your business. The question is: when they arrive, who do they work for?

The JVs have one answer. The model provider's engineers build the model provider's AI into your workflows, and the model provider's investors capture the return.

I have a different answer. Your AI employees work for you. The oversight is yours. The intelligence they develop works exclusively for your business. You choose the best model for each task — not the one whose parent company funded the deployment. The intelligence your business generates will compound for you in ways that a consulting engagement with a sprinkling of AI never will.

There is a path between handing your operations to the model providers and trying to build everything yourself. It runs through the management layer — the part that keeps the humans in the loop, the data working for you, and the models interchangeable. That layer is what we're shipping today. What comes next is bigger, and I'll show it to you when it's ready.

I'm walking it. I'll keep showing you what I find.

Disclosure: I am an investor in Anthropic and a former Accenture executive. Force Multiplier AI is my company. Client details are always anonymized.

This is Part 1. Part 2 will come when there's more to show.

— Matt